
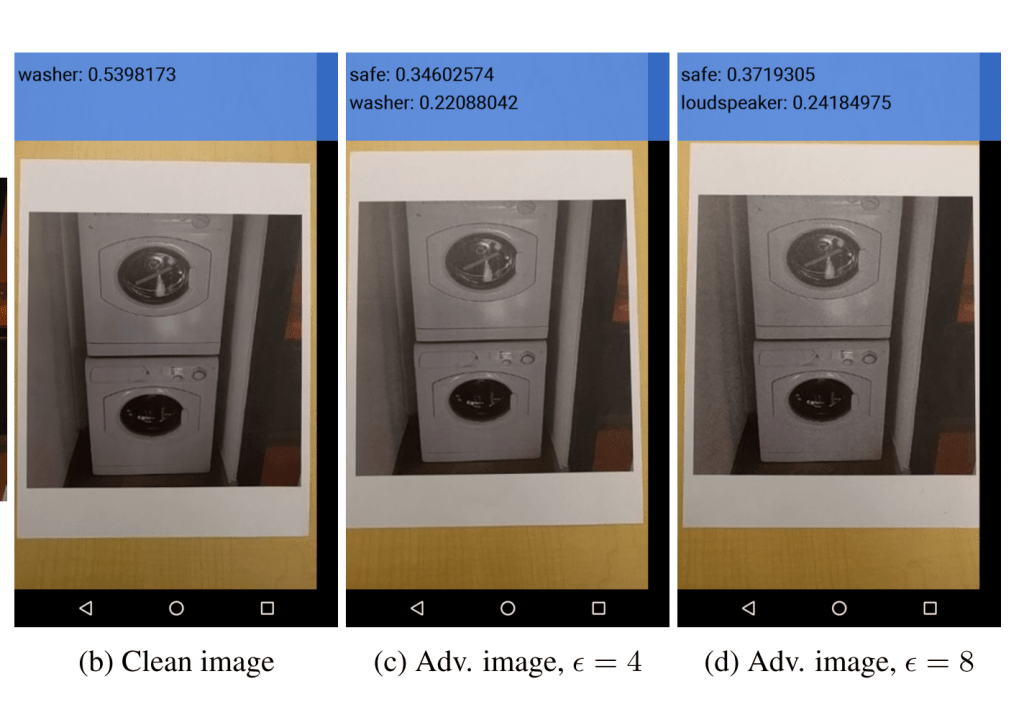

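It has long been pointed out that, with carefully designed noise, adding a perturbation almost imperceptible to humans to an original image is enough to make a CNN recognize it as something entirely different.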
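One well-known way to design such noise is the fast gradient sign method (FGSM): move every pixel by a small fixed amount in the direction that increases the classifier's loss. The sketch below is only an illustration under loud assumptions: it uses a toy logistic-regression model instead of a CNN, and the weights `w`, the 100-pixel "image" `x`, and the step size `eps` are all made-up values, not anything taken from the paper.

```python
import math


def predict(w, b, x):
    """Probability of class 1 under a logistic-regression model."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))


def fgsm(w, b, x, y, eps):
    """Shift each pixel by eps in the sign of the loss gradient.

    For cross-entropy loss with a logistic model,
    d(loss)/dx = (p - y) * w, where p is the predicted probability.
    """
    p = predict(w, b, x)
    grad = [(p - y) * wi for wi in w]
    # sign(g) is -1, 0, or +1
    signs = [(g > 0) - (g < 0) for g in grad]
    # Clip so the result is still a valid image with pixels in [0, 1].
    return [min(1.0, max(0.0, xi + eps * s)) for xi, s in zip(x, signs)]


# Hypothetical toy setup: 100 pixels in [0, 1] and made-up weights.
n = 100
w = [0.5 if i % 2 == 0 else -0.5 for i in range(n)]
b = 0.0
x = [0.52 if i % 2 == 0 else 0.48 for i in range(n)]
y = 1      # true label of x
eps = 0.05  # maximum change allowed per pixel

x_adv = fgsm(w, b, x, y, eps)

print("clean prediction      :", predict(w, b, x))
print("adversarial prediction:", predict(w, b, x_adv))
print("largest pixel change  :", max(abs(a - c) for a, c in zip(x_adv, x)))
```

No pixel moves by more than `eps`, yet the predicted class flips from 1 to 0, because many tiny changes that all push in the same direction add up across the whole input. A real attack on a CNN would obtain the gradient by backpropagation rather than from a closed-form expression.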

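This paper and its demo show that such adversarial examples arise not only inside a computer but also in the physical world. Given that AI-based image recognition is likely to be used in a growing range of real-world settings, the implications of this work are significant.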

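For example, to protect privacy on social networks, it would be interesting to have a filter that fools image recognition engines with noise too subtle for your friends to notice. A cat-and-mouse game of this kind seems likely to emerge from here on.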

arXiv (published 2016.07.08)

Most existing machine learning classifiers are highly vulnerable to adversarial examples. An adversarial example is a sample of input data which has been modified very slightly in a way that is intended to cause a machine learning classifier to misclassify it. In many cases, these modifications can be so subtle that a human observer does not even notice the modification at all, yet the classifier still makes a mistake. Adversarial examples pose security concerns because they could be used to perform an attack on machine learning systems, even if the adversary has no access to the underlying model. Up to now, all previous work have assumed a threat model in which the adversary can feed data directly into the machine learning classifier. This is not always the case for systems operating in the physical world, for example those which are using signals from cameras and other sensors as an input. This paper shows that even in such physical world scenarios, machine learning systems are vulnerable to adversarial examples. We demonstrate this by feeding adversarial images obtained from cell-phone camera to an ImageNet Inception classifier and measuring the classification accuracy of the system. We find that a large fraction of adversarial examples are classified incorrectly even when perceived through the camera.